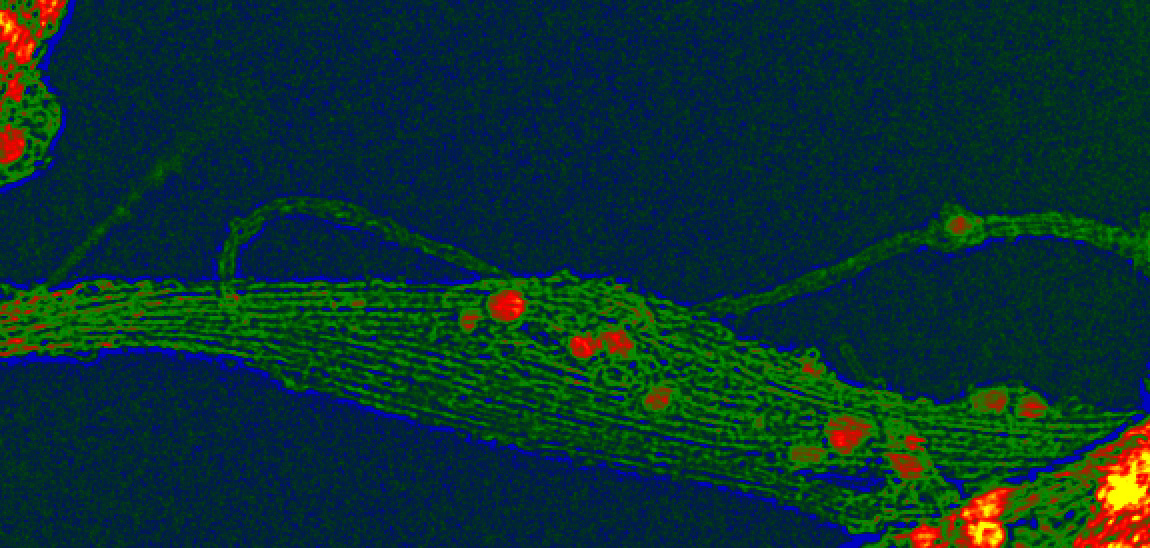

A book club guide for Films from the Future

The same structure that makes Films from the Future ideal for undergrads also makes it perfect for an extremely engaging book club – one where you not only read a book together, but also get to watch films!

Sci-fi movies are the secret weapon that could help Silicon Valley grow up

If there’s one line that stands the test of time in Steven Spielberg’s 1993 classic “Jurassic Park,” it’s probably Jeff Goldblum’s exclamation, “Your scientists were so preoccupied with whether or not they could, they didn’t stop to think if they should.” Goldblum’s...

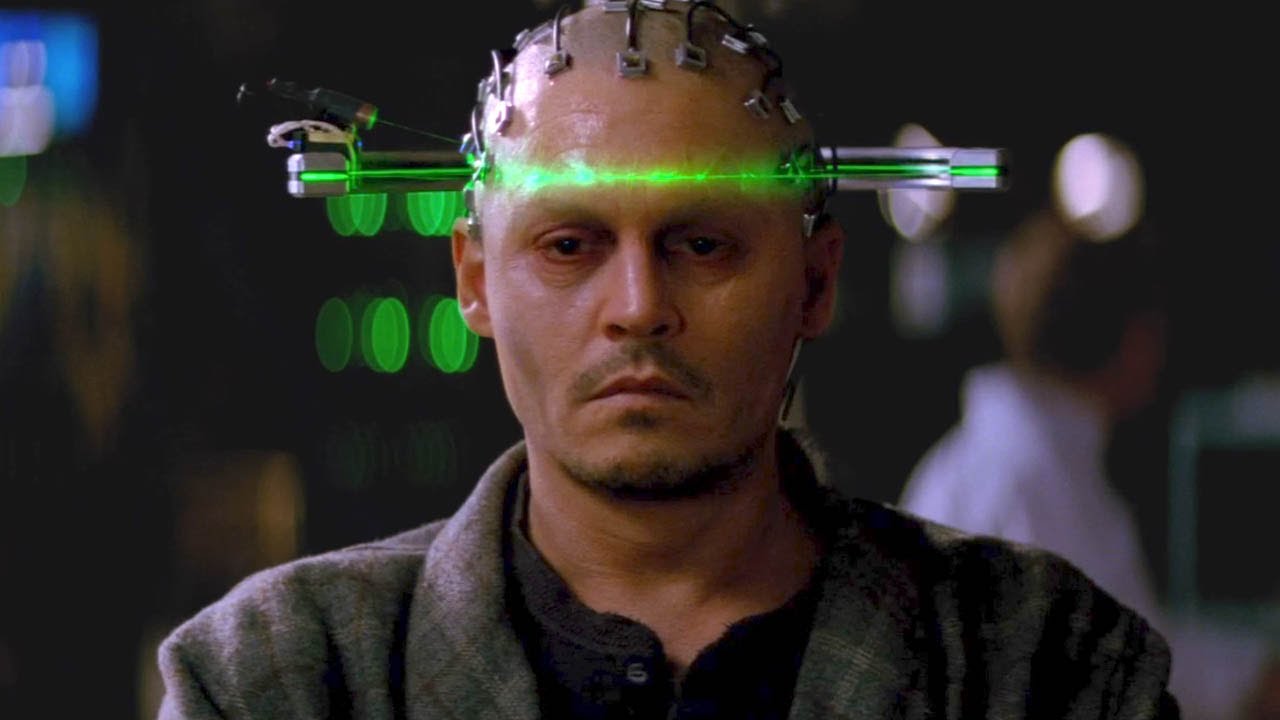

Even “bad” sci-fi movies can teach us something about emerging technologies!

The film Transcendence is not a great movie. Yet this futuristic thriller, which stars Johnny Depp as a genius scientist who mind-melds with a supercomputer, provides surprising and sometimes startling insights into how future technologies are unfolding, and the moral and ethical challenges they potentially raise.

The Honest Broker meets Dan Brown’s Inferno

Each week between now and November 15th (publication day!) I’ll be posting excerpts from Films from the Future: The Technology and Morality of Sci-Fi Movies. This week, it’s chapter eleven, and the movie Inferno. Inferno may seem like an odd choice of movie in a book...